Teach Them to Fish

Designing friction back into learning before we engineer it out of childhood entirely

Erm, quickly, some hand-to-heart disclaimers: I am a Claude power user; I believe LLMs are force multipliers on creativity; I would mourn losing all the context of my past chats. So: pro-AI, no fearmongering ahead. Human progress is never at odds with technological progress. In fact, it is vital.

With that said, I’m worried about the children. When I think about the future of AI, which is almost certainly one of deeper integration rather than outright abandonment, I think about being a child.

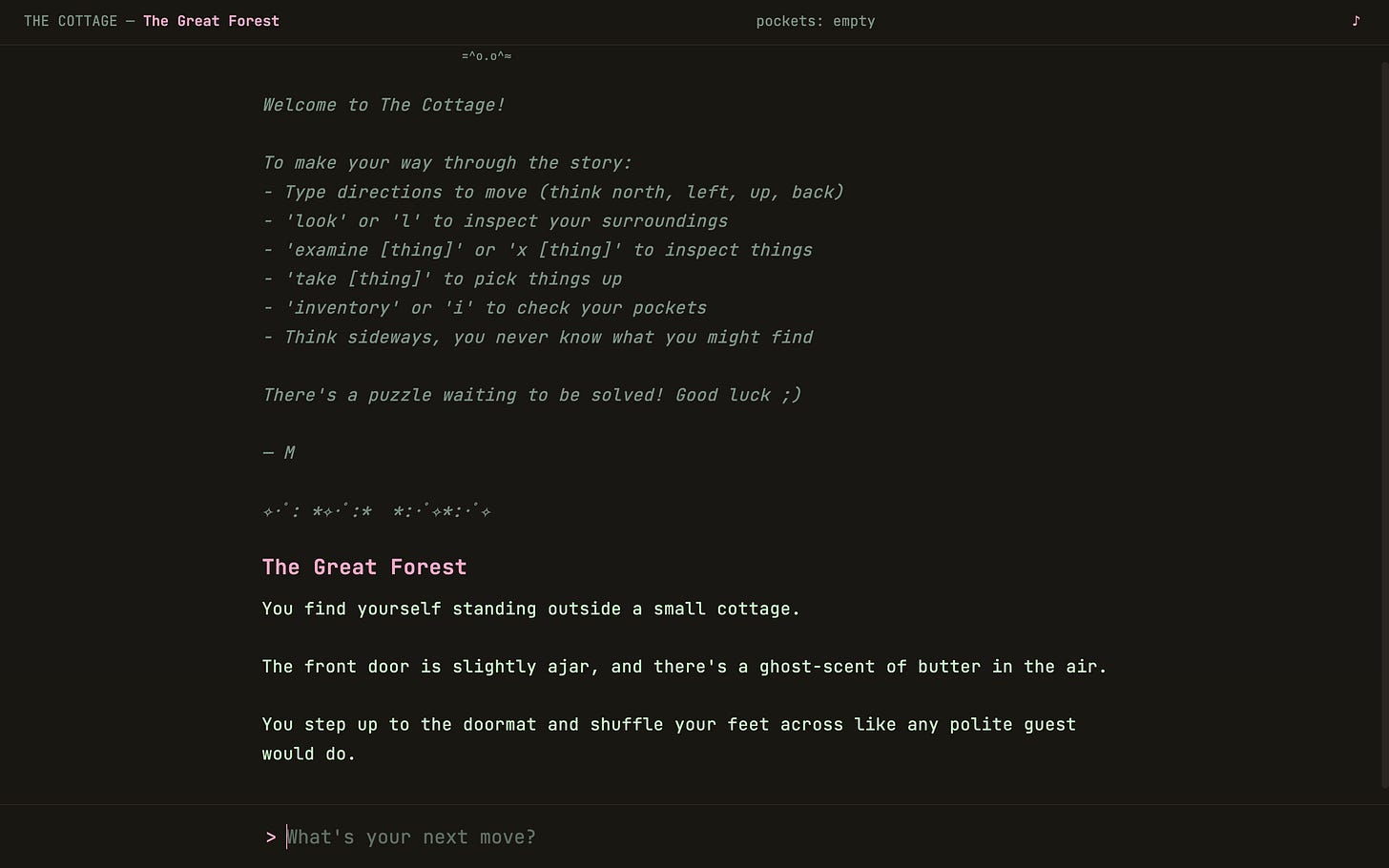

Earlier this year, I created an interactive fiction game (or text adventure, a genre from the late ’70s). I used to play these games all the time in middle school without realising how formative they were. The game interface is a tiny language parser. All you get is plain prose and a command line which takes freeform text input, matches it against a pre-defined vocabulary of verbs and nouns, and maps the result to a state change. You type out what you want to do in a constrained natural language: basic verb-noun inputs such as “go North” or “take penlight”. The gameplay options are finite but still rewarding.
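
A parser like this can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the code of the game described above: the vocabulary, the two rooms, and the names `GameState`, `parse`, and `step` are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical vocabulary and map, invented for this sketch.
VERBS = {"go", "take"}
EXITS = {"cellar": {"north": "hallway"}, "hallway": {"south": "cellar"}}


@dataclass
class GameState:
    room: str = "cellar"
    inventory: set = field(default_factory=set)
    # Items lying in each room; taking one moves it to the inventory.
    items: dict = field(
        default_factory=lambda: {"cellar": {"penlight"}, "hallway": set()}
    )


def parse(command):
    """Split input into (verb, noun); return None if it is not a known verb-noun pair."""
    words = command.lower().split()
    if len(words) != 2 or words[0] not in VERBS:
        return None
    return words[0], words[1]


def step(state, command):
    """Apply one command to the state and return the prose response."""
    parsed = parse(command)
    if parsed is None:
        return "I don't understand that."
    verb, noun = parsed
    if verb == "go":
        destination = EXITS[state.room].get(noun)
        if destination is None:
            return "You can't go that way."
        state.room = destination
        return f"You are in the {destination}."
    # The only other verb in this vocabulary is "take".
    if noun not in state.items[state.room]:
        return f"There is no {noun} here."
    state.items[state.room].remove(noun)
    state.inventory.add(noun)
    return f"Taken: {noun}."
```

Calling `step(GameState(), "take penlight")` moves the penlight into the inventory, while anything outside the vocabulary gets a flat refusal. That refusal is the friction: the player has to work out what the system will accept.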

Interactive fiction teaches children to pay attention, read slowly, and keep a mental map of the landscape as well as the inventory of nouns which the game would recognise. You have to navigate what may be possible, articulate it, and repeat until something happens. These games teach a specific kind of thinking which requires players to communicate with a system on its terms.

A blank prompt accompanied by a blinking cursor is doing something valuable that we’ve largely abandoned with the advent of LLMs. We’ve gotten very good at making interfaces frictionless, such that we often and unthinkingly become consumers of someone else’s choices, navigating a decision tree someone else built. Let’s talk about what we stand to lose when learning stops being hard.

I am not a parent, nor an educationalist. But I am a person who was a child once; is now a somewhat-victim of endless scroll and at risk of AI psychosis [sic]; and hopes to have children someday who can benefit from a rich and expansive pedagogy.

The beauty of being a child is that you are shaping the world to your view, although not consciously. Everything you know is built upon like Lego: reshaped, broken apart, and built up again constantly as you take on every microdetail of a world you’ve never before encountered.

Crucially, childhood (which I’ll define as school-age for simplicity, i.e., kindergarten through to high school) is a time for puzzle-solving. You learn arithmetic, even though you will use a calculator for most things after graduating, because numerical literacy is about logical thinking. You structure out an essay paragraph in the point-example-analysis format so that you can understand the pieces required to make a critically developed argument, although you’re unlikely to make a case for anything quite so formally in a 9–5.

Educational technology, large market though it already is, is bound to boom bigger. We know that kids (through no fault of their own) are delegating their homework to ChatGPT, outsourcing work without parsing the content, and relying on voice-to-text instead of spelling. Will we forget how to read and write and reason if we’re not intentional about how we engineer AI EdTech for the classroom? Yes, probably.

We also know that an overreliance on AI could (I mean, definitely does, but science) cause a deterioration of essential cognitive abilities. An adult today has the capacity to choose and deliberate over how they engage with LLMs, although this is no easy feat for many. For a child, however, especially one who has not yet gained the skill of unassisted reasoning, the same reliance becomes a civilisational problem. Very different stakes. How do we teach children to notice when a system is thinking for them, and to have a felt sense of the difference between their own reasoning and a highly-assisted output?

Superpowered teaching is distinct from superpowered learning. A teacher’s job is not to know everything or even to teach comprehensively, but rather to impart knowledge in a generous and contestable way. Newer LLMs are more likely to push back on absolutism, but I often get the sense that they’re more inclined to do so when they can tell you’ll be receptive. Being receptive to a challenge is not a natural instinct, and certainly not for a child.

A healthy dose of skepticism is a prerequisite for thinking well. We understand through living and being and love that truth is not binary. As Atkins writes, “in learning how to listen [...], we learn that listening is not the same as agreement, that suspicion must be tempered by charity, that truth is rarely unmediated but always emerges through the cracks of unreliable speech”. Teaching someone to push back, to consider what the other side might think, and to ask which truths may be only half-truths or less is how we get closer to solving problems. It’s a form of creativity core to our humanity. We learn how to create through finding our way towards the asymptote of our understanding of the world, which cannot be rushed.

I particularly enjoyed Surya’s argument that much of what gets the most hype is “speed as its own justification,” when in fact, “in slower ways of working, reality has a way of intruding whether you wanted to or not,” whereas with AI-assisted work:

“the iteration happens in a closed loop. The only feedback comes from the machine, and the machine will build whatever you ask without questioning. [...] Nothing pushes back. So you keep pushing. Faster. Further. Hurling yourself in whatever direction you happened to be facing when you started.”

Of course, prioritising efficiency has its place. That place is in thousands-strong enterprises; tiny, fast-moving teams; even the pinned chat I have of all my favourite meals and the weekly grocery restock list I generate from it. Intentional usage of AI is still important, and I like to believe that most of us are equipped with enough honesty and grit to acknowledge the value in doing the foundational thinking ourselves, then outsourcing the grunt work.

Another place AI absolutely belongs is in childhood development. Controversial though it may be, there is no overstating the value of plushies that speak back to children in households that don’t meet some of their fundamental wellbeing needs. The same goes for EdTech which offers one-on-one learning for students in underserved learning environments. The question isn’t whether AI belongs there; it’s how it’s designed and towards what end.

Many of our interactions with AI follow the same pattern: the AI is generating, and we are often simply receiving. Receiving is not learning. Give a man a fish or teach him how to use a fishing rod and all that. Julian Birkinshaw argues that learning necessitates friction through error, misunderstanding, complexity, and disagreement:

“learning is supposed to be a struggle [...] There are also strong academic traditions built on this notion. For example, the renowned learning theorist John Dewey said, “the origin of thinking is some perplexity, confusion or doubt”. His point was we don’t learn just by ingesting information, we learn by trying to make sense of something out of the ordinary and relating it back to what we already know. That is the process whereby we construct knowledge and understanding.”

Productive struggle in education refers to students tackling complex tasks just above their current level of skill or understanding, which creates the circumstances for deeper learning and improved resilience, academic and otherwise. In the same way that progressive overload in weightlifting builds muscle by adding greater and previously unmanageable stimuli over time, adding friction to learning pushes students to think critically and become more confident thinkers.

AI in education should therefore be designed to withhold completion rather than optimise for it. Assessed work is already shifting: more drafts, more showing of work, more process. This is harder for already-overworked educators, but necessary. On a technological level, a solution could look like adding constraints: chat-based interfaces that stop at, say, 30% of their reasoning, enough to guide direction but not enough to complete the thinking. It would require reverse engineering everything we know about enterprise-focused product development so that we can inject friction at every efficient inflection point.
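
As a toy illustration of that 30% idea, here is a Python sketch that reveals only the opening share of a worked solution. It rests on a loud assumption: that a tutor's reasoning arrives as a list of discrete steps, which real chat models do not necessarily expose. The function name `partial_hint` and the `percent` parameter are hypothetical.

```python
def partial_hint(reasoning_steps, percent=30):
    """Return only the leading share of the steps, and always at least one.

    Assumes the reasoning is already split into ordered steps; how to get
    such a list out of a real model is outside the scope of this sketch.
    """
    if not reasoning_steps:
        return []
    # Integer arithmetic avoids floating-point rounding surprises.
    shown = max(1, len(reasoning_steps) * percent // 100)
    return reasoning_steps[:shown]
```

With a ten-step solution, the student would see the first three steps and have to construct the remaining seven. The hard design question, which this sketch ignores, is deciding which 30% actually guides rather than gives the game away.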

In practice, schools have caught on to how AI can save teachers time on admin, but the current infrastructure is ahead of the governance that educational institutions are scrambling to align on. Of similar importance, frontier labs are actively working on their approaches to education. OpenAI and Anthropic have been heavily funding advanced research on AI and learning, as well as hiring Heads of Education and working closely with schools and educators. The right people are part of the conversation! At the same time, building for learning is a somewhat different hurdle to optimising primarily for safety, usability, and adoption.

For example, OpenAI’s Study Mode, which guides instead of spoonfeeds, is a great start. Nonetheless, it’s an opt-in feature, which means students have to choose the harder path over the easier one, every time. I don’t imagine I would have had the willpower to opt in every time as a sixteen-year-old on a deadline. It’s akin to expecting avid snackers to stop at one Dorito when the chips have been specifically engineered for addiction, or expecting anyone to put their phone down at a sensible hour now that the endless scroll exists.

The machine, by default, should bear the burden of knowing when to push back, refuse, and soften at the right time and for the right reasons, as is the hallmark of good pedagogy.

Michael Inzlicht puts it this way:

“The goal should be to harness AI’s benefits while preserving the friction that makes us human, [...] struggle teaches us, loneliness connects us, and effort gives our achievements meaning. In rushing toward a frictionless future, we must be careful not to smooth away the very experiences that contribute to a meaningful life.”

Ease makes things hard in the long term; difficulty is best won through sludge. We’re playing the long game.